When it comes to self-driving cars, Tesla is perhaps the first name to spring to mind, having had some level of steering autonomy even back in 2016. However, around the same time, Nissan, too, had its own self-driving system called ProPilot, and today we’re in Japan to experience its latest iteration – AI-based ProPilot.

The company’s first ProPilot version rolled out in 2016 and was capable of autonomous driving within a single highway lane, keeping the car centred while maintaining vehicle-to-vehicle distance within a preset speed range. In 2019 came ProPilot 2.0, which could drive autonomously on multi-lane highways, overtake slower vehicles with the driver’s authorisation, and offer hands-off driving on a single-lane highway with one-way traffic.

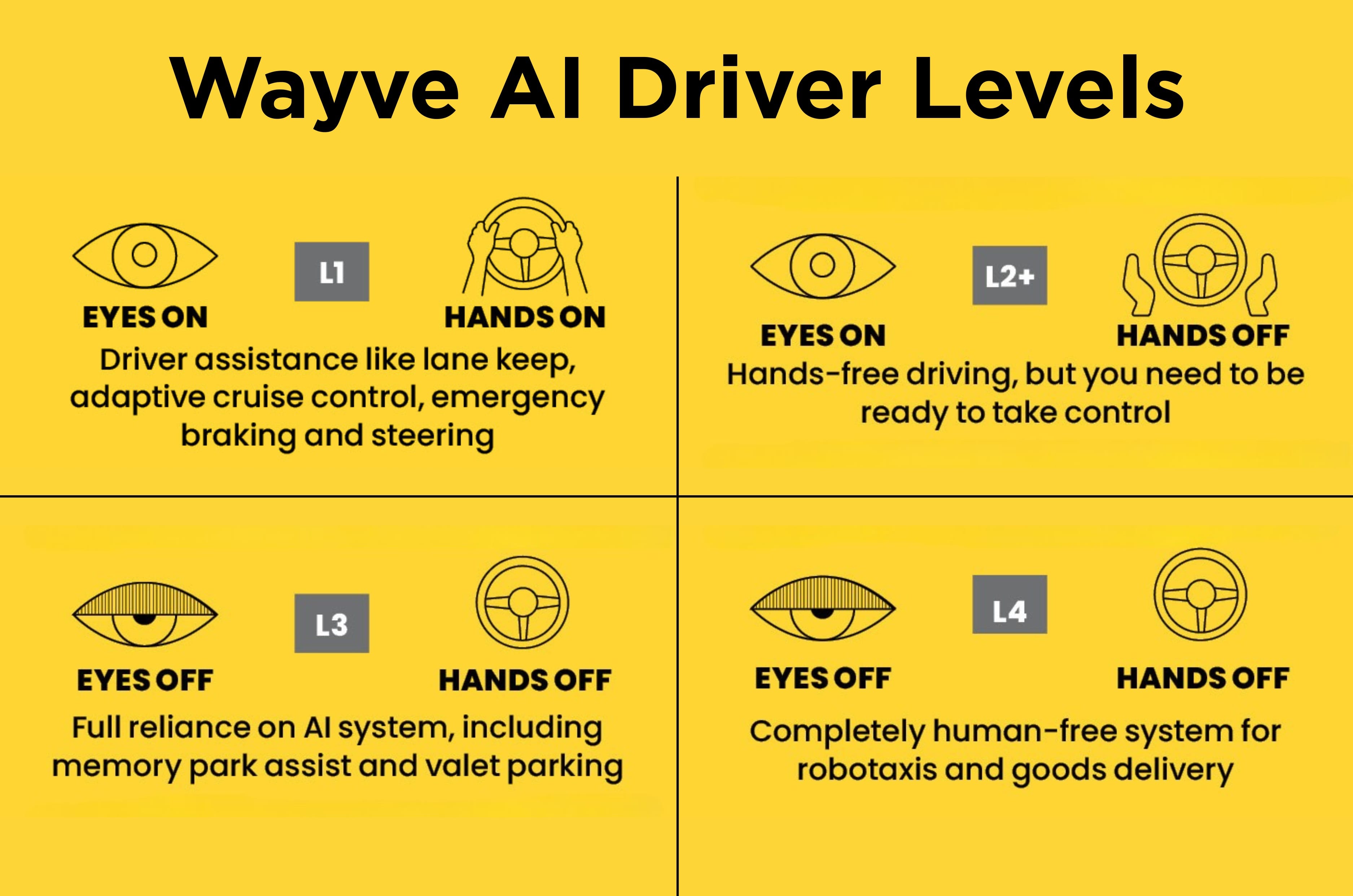

The system we are seeing today is perhaps the biggest leap forward thus far, and that’s down to the software running it. Where earlier versions used rule-based algorithms, the current system – developed by Wayve, a UK-based autonomous tech specialist – is a fully end-to-end, onboard AI system residing between sensor input and controller output.

Called Wayve AI Driver, the system does not need HD maps (including elevation data) to recognise its surroundings; maps are used only for navigation. Wayve also says its system is flexible and can work with different combinations of sensors and cameras, making it easy to integrate into a manufacturer’s existing production vehicles.

Cameras, radar and lidar working together

Nissan has partnered with Wayve, and the car being used is a regular Ariya EV equipped with an extra suite of sensors: 11 cameras, 5 radars and 1 lidar. This stands in stark contrast to Tesla, which has done away with its non-camera sensors altogether, relying instead on camera input only. Tetsuya Iijima, executive chief engineer at the brand’s ADAS Development Department, says the company prefers its sensor-backed route as there are still ‘edge cases’ in which a camera-only system could get confused. “Shadows can confuse a human, so also a camera and AI-based system, but not radar and lidar. That will sense the physicality of the object,” says Iijima.

The lidar is housed along with a camera suite on a roof carrier, and though it’s a retrofit, it is quite neatly done, with the black finish merging nicely with the black roof and pillars of the Ariya. The five radars are located in the bumpers: three up front, one at the centre and one at each edge, and two at the rear, at the outer edges of the car. “We’re well equipped to handle the crowded streets of Tokyo,” quips Iijima. I don’t tell him about Mumbai, but yes, Tokyo traffic, both automotive and pedestrian, is very dense.

How the AI behaves on the road

This is an eyes- and hands-off system, so the car is fully capable of navigating on its own. But Japanese law prohibits autonomous cars from running without a driver behind the wheel, so our backup driver today is chief engineer Iijima himself, who is beaming with confidence in the driver’s seat. He selects the route via the car’s touchscreen, hits start, and we’re off. We begin inside a hotel’s premises and make our way onto public roads via a traffic light, and I’m immediately reminded of the company’s claim that it can ‘recognise and predict how people and objects will move around the vehicle and reason the best path forward’.

Our signal goes green, and the path directly ahead is clear, but the Ariya does not move. There’s a woman hurrying across the zebra crossing who clearly has no intention of backtracking, and so the Ariya waits until she’s across. With older systems, the car would likely have moved forward and then come to a halt again as soon as the pedestrian was in its path. AI Driver stays put, knowing “she is on a zebra crossing and is soon going to be in front of us,” says Iijima.

The system’s big difference is the ‘ability to reason’, says Iijima. Like a human driver, the AI is continuously building an enormous set of experiences, and that is what these tests are for: the system is training itself to drive a car. I have experienced autonomous cars before, including Nissan’s ProPilot 2.0 system. What’s different here is just how natural the movements all feel – not robotic, but predictive, especially in applying the brakes, where it feels like it’s sensing how fast a car is slowing or how quickly a human is crossing a street and adjusting its braking accordingly.

The rest of the drive is pretty straightforward and unremarkable, and all through, Iijima is busy speaking to us and answering our questions. At no point does he need to intervene; not once. The system is already at an advanced stage, says Iijima, and there’s a ‘Robotaxi’ pilot service scheduled to go live later this year, following which Nissan intends to offer the system on its production cars by 2027. Will it be called ProPilot 3.0? That, Nissan says, hasn’t been decided yet. Given how much of a leap ahead this is, I’m guessing it’s going to be something else altogether.